By Dakota Winslow and Kanghyun Lee

GitHub repo: dakotawinslow/hoplite: System for moving AgileX Limo robots in formation

Video: EC535 Final project: Hoplite: Coordinated LIMOBot Swarm Control

Abstract

We present a coordinated multi-robot system using three AgileX LIMO robots fitted with Mecanum wheels for omnidirectional movement and localized with an OptiTrack motion capture system. The robots act as individual agents loosely commanded by a centralized leader, itself directed by user input through a wireless gamepad. The end user directs the swarm through its center-of-mass, allowing the individual robots to direct themselves into position. The motion capture system provides real-time pose information to the entire swarm, enabling both precise movements and collision avoidance. We successfully demonstrated real-time direct control, verified accurate motion capture localization streaming, and created a simulation environment to test swarm behavior. This system lays the groundwork for a fully autonomous formation control framework for small mobile robot teams.

Introduction

As multi-robot systems become increasingly common in autonomous logistics, warehouse automation, and swarm robotics, efficient coordination and communication among robots is essential. However, maintaining formation control across multiple robots in real-time poses significant challenges, especially when the localization system and control logic operate across different versions of the Robot Operating System (ROS).

This project explores the implementation of a small-scale mobile robot swarm using three AgileX LIMO robots. These robots rely on external localization via an OptiTrack motion capture system, which streams robot positions through the Motive software as ROS2 topics. Since the LIMOBots operate on ROS1, a ROS1–ROS2 bridge is required to translate positional data so that the robots can use MoCap-based feedback for control.

Our approach uses a leader–follower control architecture, where the leader LIMOBot receives joystick commands, determines the formation center, and computes target positions for follower LIMOBots. The system is designed to maintain fixed formation spacing and simulate coordinated motion, even in the presence of localization delays and communication overhead.

This project builds on concepts from embedded systems, real-time control, and distributed robotics, applying ROS frameworks and motion capture integration in a resource-constrained environment. Our implementation provides a foundation for future extensions such as dynamic formations, autonomous leader behavior, and sensor-based localization fallback systems.

System Architecture and Control Logic

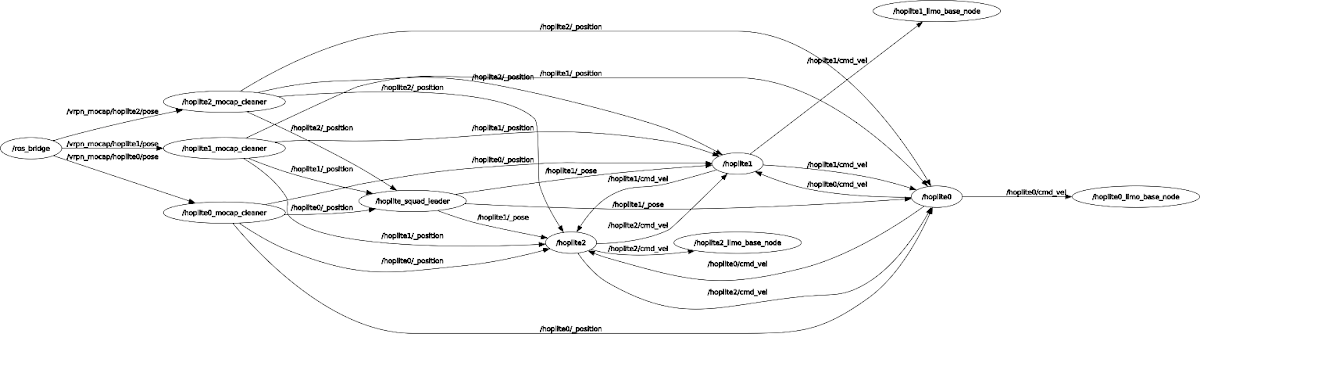

1. High-Level System Overview

The user controls the swarm through a wireless gamepad connected to any one of the robots. Joystick events are sent to a control node that integrates the joystick movements into a pose, which is processed by the hoplite leader node to create target poses for each robot in the swarm. Each robot then navigates to its target pose using potential field navigation, avoiding the positions of the other robots as reported by the motion capture system.

2. Joystick Interface for Leader Control

The leader node is controlled by a USB gamepad, which can be connected to any USB- and ROS1-capable computer on the network (we connected it to a robot for simplicity). Joystick and button events are captured by the Linux xpad driver and exposed as a /dev/input/js* device. The device is read by ROS’s off-the-shelf joy node, which emits a /joy message detailing the status of each button and joystick every time the driver reports a change.

Our joystick_control node subscribes to these /joy messages and maintains an internal pose model. Each time a /joy message is received, the model’s pose is updated and published as a Marker() topic. While we read /joy messages as fast as they come in, we limit traffic on the network by publishing markers only every 0.1 seconds.
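The pose integration and rate limiting described above can be sketched in plain Python, without any ROS dependency. This is a minimal illustration, not the project's joystick_control code: the class name, the gain values, the world-frame mapping of the stick axes, and the choice to integrate the previously held stick values over the time between messages are all assumptions.

```python
import math


class JoystickPoseModel:
    """Hypothetical stand-in for the pose model inside joystick_control."""

    def __init__(self, max_speed=0.5, max_turn_rate=1.0, publish_period=0.1):
        self.x = 0.0
        self.y = 0.0
        self.theta = 0.0
        self.max_speed = max_speed            # m/s at full deflection (assumed)
        self.max_turn_rate = max_turn_rate    # rad/s at full deflection (assumed)
        self.publish_period = publish_period  # seconds between published markers
        self._held_axes = (0.0, 0.0, 0.0)
        self._last_time = None
        self._last_publish = None

    def on_joy(self, axes, now):
        """Handle one joystick message: axes = (x, y, turn), each in [-1, 1]."""
        if self._last_time is not None:
            # The joy node only reports changes, so the previous axes were
            # held for the whole interval since the last message.
            dt = now - self._last_time
            ax, ay, turn = self._held_axes
            self.x += self.max_speed * ax * dt
            self.y += self.max_speed * ay * dt
            theta = self.theta + self.max_turn_rate * turn * dt
            # Wrap the heading into (-pi, pi].
            self.theta = math.atan2(math.sin(theta), math.cos(theta))
        self._held_axes = tuple(axes)
        self._last_time = now

    def due_to_publish(self, now):
        """Return True at most once per publish_period."""
        if (self._last_publish is None
                or now - self._last_publish >= self.publish_period):
            self._last_publish = now
            return True
        return False
```

In a real node, on_joy would be called from the /joy subscriber callback and due_to_publish would gate the marker publisher.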

3. Squad Leader Command

The squad leader node runs on any computer in the ROS network (for our demo, we used a VM running Ubuntu 18.04 and ROS Melodic). The node reads in the markers from the joystick controller and calculates the target poses for each individual member of the swarm. Our only current positioning mode, regular_polygon_formation(), defines a rigid spacing between robots and places n of them around the central control point such that the spacing between neighbors is fixed, creating regular polygons. Each robot’s facing is set to match the input pose, so all agents face the same direction.

Figure 2: RViz visualization of the robots taking formation. The ‘control point’ is the small green arrow, the target poses for the robots are magenta.

From these calculations, the leader publishes the poses under the topics /hoplite*/_pose (one for each member of the swarm).
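The geometry behind this formation mode can be sketched as follows. This is an illustrative reconstruction under stated assumptions, not the repository's regular_polygon_formation(): the function name polygon_targets, its signature, and the choice to place the first vertex along the heading direction are hypothetical. For n robots with neighbor spacing s, the vertices lie on a circle of radius s / (2 sin(π/n)) around the control point.

```python
import math


def polygon_targets(center_x, center_y, heading, n, spacing):
    """Return n (x, y, heading) target poses on a regular polygon.

    Neighboring vertices are `spacing` apart, and every robot takes the
    heading of the control point. The first vertex is placed along the
    heading direction (an assumption made for this sketch).
    """
    if n < 1:
        raise ValueError("need at least one robot")
    if n == 1:
        return [(center_x, center_y, heading)]
    # Circumradius of a regular n-gon with side length `spacing`.
    radius = spacing / (2.0 * math.sin(math.pi / n))
    poses = []
    for i in range(n):
        angle = heading + 2.0 * math.pi * i / n
        poses.append((center_x + radius * math.cos(angle),
                      center_y + radius * math.sin(angle),
                      heading))
    return poses
```

With n = 3 this yields an equilateral triangle whose centroid is the control point, which is consistent with the triangular formation used in the demo.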

4. Individual Hoplite Software

Each robot runs its own stack of control nodes, consisting of a hoplite_soldier, a limo_driver, and a mocap_cleaner.

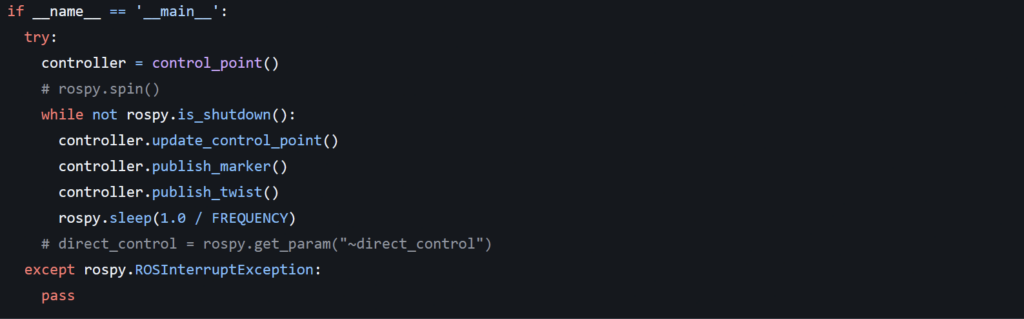

hoplite_soldier

The hoplite_soldier node is responsible for reading target _pose messages from the leader and calculating a path to match that pose. It does so by calculating a movement vector based on a potential field created by an attractor at the target location and repulsors at the outer boundary of each other agent. The system is tuned with heavy dropoff in the repulsion field to allow the robots to work near each other but not collide.
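A minimal sketch of this kind of potential field is shown below. It is not the hoplite_soldier implementation: the function name, the gain values, the robot radius, the cubic falloff, and the speed clamp are all assumptions chosen to illustrate an attractor at the target combined with steeply decaying repulsors measured from each neighbor's outer boundary.

```python
import math


def potential_field_velocity(position, target, others, robot_radius=0.2,
                             attract_gain=1.0, repel_gain=0.05,
                             max_speed=0.5):
    """Return a clamped (vx, vy) command from a simple potential field.

    position, target: (x, y) tuples; others: list of (x, y) neighbor centers.
    All gains and the radius are illustrative values, not tuned constants.
    """
    px, py = position
    # Attractor: pull proportional to the offset from the target.
    vx = attract_gain * (target[0] - px)
    vy = attract_gain * (target[1] - py)
    for ox, oy in others:
        dx, dy = px - ox, py - oy
        dist = math.hypot(dx, dy)
        if dist < 1e-9:
            continue  # coincident centers: push direction is undefined
        # Distance to the neighbor's outer boundary, floored to avoid
        # dividing by zero when the robots touch.
        gap = max(dist - robot_radius, 1e-3)
        # Inverse-cube falloff: strong up close, negligible far away.
        push = repel_gain / gap ** 3
        vx += push * dx / dist
        vy += push * dy / dist
    speed = math.hypot(vx, vy)
    if speed > max_speed:
        vx *= max_speed / speed
        vy *= max_speed / speed
    return vx, vy
```

The steep falloff is what lets robots hold positions close to one another: a neighbor a few body lengths away contributes almost nothing, while one that is nearly touching dominates the command.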

limo_driver

The final corrected velocities are published as Twist messages under the /hoplite*/cmd_vel topic for the limo_driver to read. The limo_driver node serves as a bridge between ROS and the LIMO’s motion platform, which is connected to the Linux system via a serial link. This node was provided as part of the LIMO’s default software package and was modified only slightly to read messages from our custom topic.

mocap_cleaner

The mocap_cleaner node acts as a simple translator that transforms the XZY coordinate messages from the motion capture system into XY poses for the robots, which operate only in 2D. Messages from the motion capture system include translation along and rotation out of the ground plane (involving the “up” axis), but we discard these since the robots cannot actuate in those axes.
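The flattening step can be illustrated with a short sketch. It is not the mocap_cleaner source: the function names are hypothetical, and it assumes the incoming pose has already been reordered so that the third position component is "up" and that the orientation is a unit quaternion in (x, y, z, w) order. If the up axis differs, the indices would change accordingly. The heading is recovered by rotating the robot's forward axis and projecting it onto the ground plane, which discards roll and pitch.

```python
import math


def rotate_vector(q, v):
    """Rotate 3-vector v by unit quaternion q = (x, y, z, w)."""
    qx, qy, qz, qw = q
    vx, vy, vz = v
    # t = 2 * (q_vec x v)
    tx = 2.0 * (qy * vz - qz * vy)
    ty = 2.0 * (qz * vx - qx * vz)
    tz = 2.0 * (qx * vy - qy * vx)
    # v' = v + w * t + q_vec x t
    return (vx + qw * tx + (qy * tz - qz * ty),
            vy + qw * ty + (qz * tx - qx * tz),
            vz + qw * tz + (qx * ty - qy * tx))


def flatten_pose(position, orientation):
    """Reduce a 3D pose to (x, y, yaw), assuming the third axis is up.

    Height and any out-of-plane rotation are dropped, since a ground
    robot cannot act on them.
    """
    x, y, _height = position
    fx, fy, _fz = rotate_vector(orientation, (1.0, 0.0, 0.0))
    yaw = math.atan2(fy, fx)
    return x, y, yaw
```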

5. Localization via OptiTrack Motion Capture

We use an OptiTrack motion capture system to provide each robot with localization data about itself and its peers. OptiTrack uses infrared cameras to track individual retroreflective markers arranged in known configurations, which are tracked as rigid bodies. Poses for each of these detected rigid bodies are published to a VRPN server, which interfaces with a ROS2 node that converts the server updates into ROS2 pose messages. These messages are then bridged to our ROS1 network by a bridge node that connects both networks. This bridge node (as well as the ROS2 VRPN node) runs on a Raspberry Pi located in RASTIC as part of the motion capture infrastructure there.

The motion capture system captures and reports position data at 120 Hz with positional precision under 1 mm, making it more than capable for our system. While we explored data cleaning via Kalman filter or rolling average, neither was found to be necessary, and we safely used the motion capture localization as ground truth for all positional calculations.

Results

Our process was highly iterative, with each incremental success supporting and informing the next. As a result, the project grew very organically, rather than progressing along a pre-established path of goals. At the point of evaluation, we were able to place three robots in arbitrary positions within the RASTIC MoCap arena. When the system was started, these three robots determined their own locations in 2D space and moved themselves to orient with the start position, defined as a triangle around the central origin of the room pointing East. The robots then moved in response to inputs from the gamepad, maintaining their formation and returning to it if perturbed. The movements of the robots were smooth and free of resonance or oscillation, thanks to the PD controller.

Limitations and Future Work

The system, while functional, is far from perfect. The collision avoidance system tends to cause the robots to move very slowly any time they might collide. The function scheduling in the joystick_control Python node prioritizes joystick input response over publishing marker outputs, meaning that as long as a controller stick is held down, the robots do not receive updated commands. Despite these shortcomings, the system is generally functional and smooth, and it presents a solid base upon which to develop additional functionality.

We see a future for the system as a functional semi-autonomous lab assistant, capable of moving in concert with systems being tested. With the on-board cameras, a hoplite squad could capture 360-degree video of a robot moving for further analysis. With arms attached to their chassis, the robots could serve as a moving support platform for unsteady walking robots or hold targets for other robots to attempt to contact. Additionally, other formations could be explored, creating a system of robots capable of moving tools and equipment around during lab restructuring or moving in concert to support large loads.

Bibliography

https://github.com/agilexrobotics/limo-doc/blob/master/Limo%20user%20manual(EN).md

The official manual for AgileX LIMO Robots. This GitHub repository also contains links to source code for the robot itself.

https://limo-agx.github.io/index.html

This unofficial guide has additional helpful information about the LIMOs, including how to return them to their factory default settings (very helpful for RASTIC bots which are in an unknown state).

https://www.baillieul.org/Robotics/agitr-letter.pdf

Excellent primer to the world of ROS.

https://www.sciencedirect.com/science/article/pii/S1474667015404616

https://link.springer.com/article/10.1007/s11721-013-0089-4

Papers on the topic of swarm navigation and collision avoidance. Unfortunately, we were unable to make use of the techniques described in these papers due to time constraints, but they would be the starting point for a continued project.